Taking a Deep Dive into Deep Learning

January 8th, 2021

Are you interested in learning about deep learning and its applications? In this blog post, we will go over what deep learning is, the reasons for its popularity, and some of its applications in different industries.

My Journey in Deep Learning:

I came across deep learning and its applications while working on research projects during my undergrad. It was daunting and overwhelming at first, with so much jargon: AI, deep learning, machine learning and big data. I was fascinated by the various applications and wanted hands-on experience that could help me get started in data science, a field built around data sets, data analysis and data visualization.

I came across the Global Academic Internship Program (GAIP), organized by Corporate Gurukul along with the National University of Singapore (NUS) and Hewlett Packard Enterprise (HPE), which provided the opportunity to work on data science projects using raw data.

When I was admitted to this internship during the junior year of my undergrad, I had only Python and basic Linux experience. The coursework and projects on Big Data Analytics helped me gain insights into deep learning and its applications, which motivated me to pursue my Master's with a Data Science specialization.

The Ins and Outs of Deep Learning

Deep learning is a subset of machine learning based on artificial neural networks, which are loosely inspired by neurons in the human brain. It is used for a wide range of applications, such as object detection, natural language understanding, computer vision, speech recognition and self-driving cars, and many frameworks and libraries are available for it.

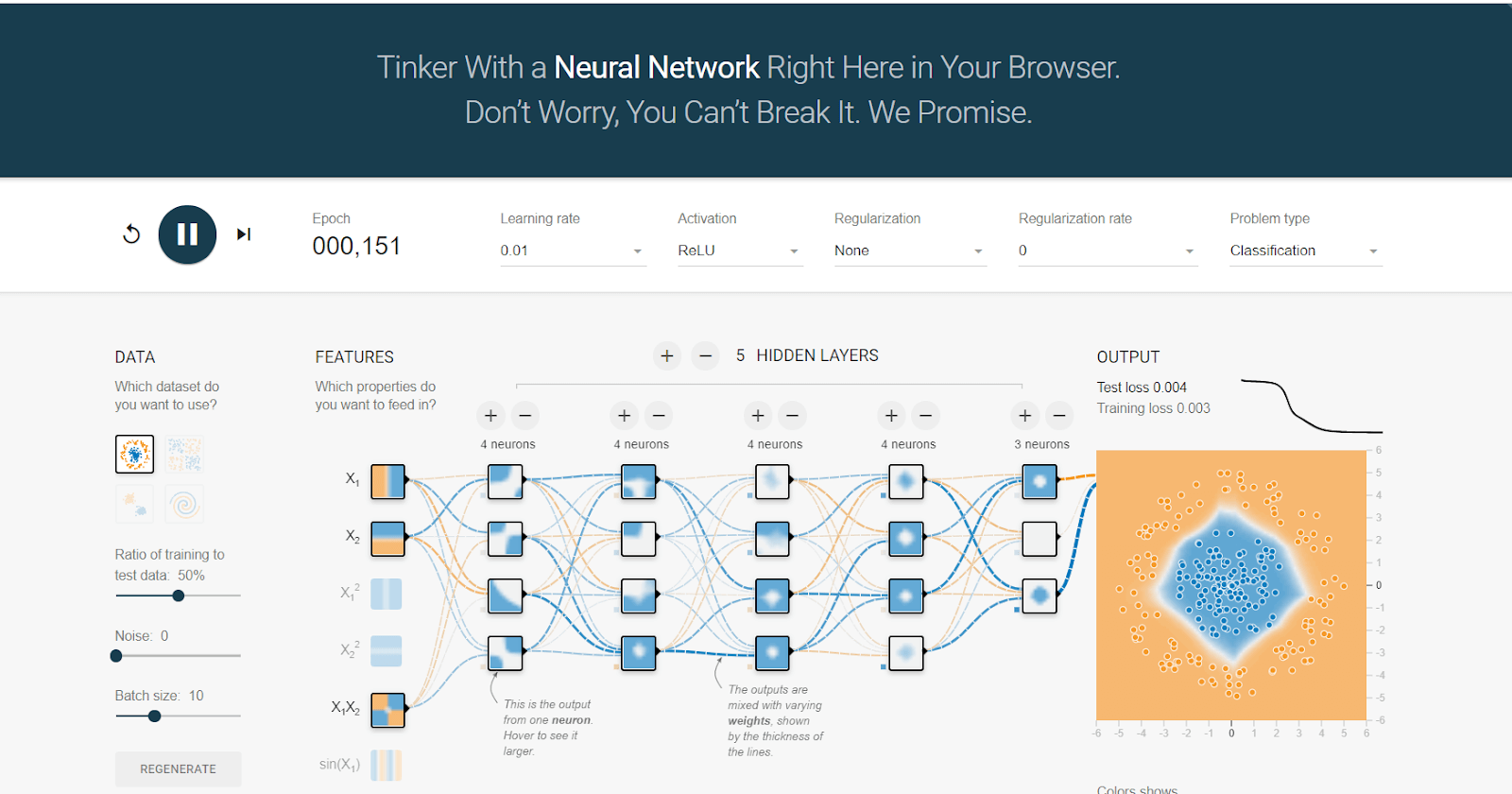

Deep learning algorithms are usually trained for many iterations on huge amounts of data, directly and without manual feature extraction. You can play with neural networks that identify and distinguish patterns in data using TensorFlow Playground, which runs in the browser!

In this example, clicking the run button starts training a neural network that tries to separate the blue and orange points in the data. The network takes a few features as input and passes them through several layers between input and output to predict the category of each data point.

Neural networks consist of multiple layers, which allow the model to build up a complete representation of the data. Most state-of-the-art models contain a large number of hidden layers, each of which learns progressively more abstract features from the input data. They can be used for both supervised learning, where the required output is available for each input, and unsupervised learning, where only the input data points are available, and they can help formulate a strategy around business intelligence.

Deep Artificial Neural Networks

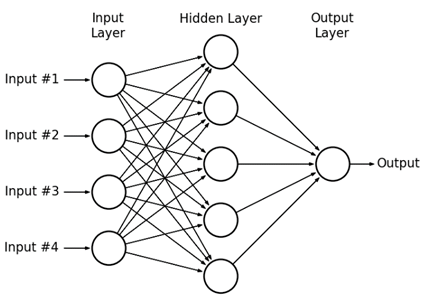

An Artificial Neural Network (ANN) is made up of multiple nodes linked together, a structure inspired by neurons in the human brain. Each node takes input data and applies a specific function that transforms the input and passes it on as output to another node. An ANN consists of an input layer, hidden layers, and an output layer. A large number of layers is what makes the network “Deep”.

Figure: Example of Simple Feed Forward ANN

Input layer nodes take in the input features from the dataset and are linked to all the hidden layer nodes through edges that carry “weights”. These weights determine which input nodes matter more. Each hidden layer node applies an activation function to the weighted sum of its inputs. The output layer takes in the weighted sum of the hidden layer nodes and transforms it into the required output.
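The forward pass described above can be sketched in a few lines of plain Python. This is only an illustration, not how any particular framework implements it: the function names `dense_layer` and `forward`, the choice of ReLU for the hidden layer and sigmoid for the output node, and all weight values are assumptions made for the example.

```python
import math


def relu(x):
    """ReLU activation: passes positive values through, clips negatives to 0."""
    return max(0.0, x)


def sigmoid(x):
    """Sigmoid activation: squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def dense_layer(inputs, weights, biases, activation):
    """Compute one layer: activation(weighted sum of inputs + bias) per node.

    weights[k] holds the edge weights from every input to node k.
    """
    outputs = []
    for node_weights, bias in zip(weights, biases):
        weighted_sum = sum(w * x for w, x in zip(node_weights, inputs)) + bias
        outputs.append(activation(weighted_sum))
    return outputs


def forward(inputs, hidden_weights, hidden_biases, output_weights, output_bias):
    """Input layer -> one hidden layer (ReLU) -> single output node (sigmoid)."""
    hidden = dense_layer(inputs, hidden_weights, hidden_biases, relu)
    return dense_layer(hidden, [output_weights], [output_bias], sigmoid)[0]


# Hypothetical values: two input features, two hidden nodes, one output node.
features = [1.0, 2.0]
hidden_weights = [[0.5, -1.0], [1.0, 1.0]]
hidden_biases = [0.0, 0.0]
output_weights = [2.0, 1.0]
output_bias = -3.0

prediction = forward(features, hidden_weights, hidden_biases, output_weights, output_bias)
print(prediction)
```

Stacking more calls to `dense_layer` between the input and the output is all it takes to add further hidden layers to this sketch.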

The ANN learns these weights by iterating over the dataset multiple times, after which the weights can be used to predict the output for a given input. The simple feed-forward ANN shown here has few hidden layers, but a neural network can contain many of them, which is what makes it a “Deep Neural Network”.
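As a rough sketch of what "learning the weights by iterating over the dataset" means, the example below trains a single sigmoid node with stochastic gradient descent. It is a deliberately tiny stand-in for a full network: the toy dataset, the learning rate, the number of passes and the function names `train_neuron` and `predict` are all assumptions chosen for illustration.

```python
import math


def sigmoid(x):
    """Sigmoid activation: squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def train_neuron(samples, epochs=2000, learning_rate=0.5):
    """Learn the weights of one sigmoid node with stochastic gradient descent."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):  # one epoch = one full pass over the dataset
        for inputs, target in samples:
            weighted_sum = sum(w * x for w, x in zip(weights, inputs)) + bias
            # Gradient of the cross-entropy loss with respect to the weighted sum.
            error = sigmoid(weighted_sum) - target
            # Nudge every weight in the direction that reduces the error.
            weights = [w - learning_rate * error * x for w, x in zip(weights, inputs)]
            bias -= learning_rate * error
    return weights, bias


def predict(weights, bias, inputs):
    """Return class 1 if the node's output is at least 0.5, else class 0."""
    weighted_sum = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if sigmoid(weighted_sum) >= 0.5 else 0


# Toy dataset (an assumption): the label is 1 only when both features are 1.
dataset = [([0.0, 0.0], 0), ([0.0, 1.0], 0), ([1.0, 0.0], 0), ([1.0, 1.0], 1)]

weights, bias = train_neuron(dataset)
predictions = [predict(weights, bias, inputs) for inputs, _ in dataset]
print(predictions)
```

Deep learning frameworks apply the same idea to every weight in every layer, using backpropagation to compute the gradients automatically.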

Building Deep Learning

Deep learning has become more popular than other machine learning algorithms because it outperforms them on many supervised learning tasks, and its rise has gone hand in hand with that of artificial intelligence. An abundance of data, the availability of high-performance computing systems, and GPUs for parallel processing make it an easy choice.

Over the past few years, the amount of data being generated has been increasing exponentially, especially in the form of unstructured data like images, videos and text. With enormous data available, it becomes essential to explore better approaches to make sense of it.

Deep learning can even serve as a stepping stone to becoming a data analyst or a data scientist. You may hit roadblocks while working on neural network architectures, but the only way forward is to persevere and work with the data you have to communicate results.

An important property of deep neural networks is that results generally improve with more data, more computation power and bigger models. With data storage and computation becoming increasingly cheap, deep learning algorithms can be trained on large cloud GPUs or custom parallel processing devices that can handle a lot of training data.

These algorithms use many complex mathematical operations to approximate a function that maps inputs to outputs. For example, if we want to identify dogs and cats in images, the neural network needs to be trained on a lot of images so that it stores a representation of cat and dog images as “weights” in the network, which can then be used to distinguish between cats and dogs.

Slides: Andrew Ng

Deep Learning Use Cases:

1. Health Care:

Deep learning algorithms for image recognition, such as Convolutional Neural Networks (CNNs), are well suited to detecting and diagnosing diseases by analyzing patterns in medical images like MRIs and X-rays. Drug prescription, drug discovery and patient data analytics are some of the other applications in the health tech industry.

1. Marketing:

Because deep learning algorithms do not require much manual feature extraction, they can be used to customize advertisements through product recommendation systems, customer service chatbots and customer retention analysis. Ad bidding analysis can be used to reduce costs while increasing revenue through targeted ads.

1. Cyber Security:

Deep learning algorithms can be used to detect malicious activity and anomalies in transactions.

1. Robots & Self driving cars:

Autonomous robots and self-driving cars can be trained to interpret visual inputs and process them for their tasks. Robots use deep learning algorithms to detect real-world objects and interact with the environment. Self-driving cars use object detection algorithms to detect road lanes and signs and to avoid collisions.

1. Fintech Industry & Banking Sector:

Detecting fraudulent transactions, predicting stock price trends, and assessing loan and credit applications are some use cases in the Fintech industry where deep learning algorithms can help, given the large amount of transaction data generated every day.

1. Customer Service:

Customers often prefer chatbots to waiting to connect with customer service representatives. Customer service chatbots and assistants that understand customers' concerns can be built using language modelling and natural language processing deep learning algorithms.

1. Entertainment:

Movie and TV show streaming platforms use recommendation systems to improve content discovery and the customer experience. They use features like watch history and online behaviour to make suggestions. Deep learning algorithms can also be used for adding subtitles to movies and generating realistic images.

How to Get Started? - Deep Learning Frameworks:

Deep learning algorithms can be implemented using many open-source libraries, available in multiple programming languages like Python, R, JavaScript and Java. Python is the most popular language for deep learning due to its many libraries and large open-source communities. The following are some of the popular deep learning frameworks in Python:

1. Keras: Keras is an API that uses simple, easy-to-understand code to build deep neural networks. It has good documentation and runs on top of another framework, such as TensorFlow, as its backend. Keras is often recommended to beginners because it is easy to use.

1. TensorFlow: TensorFlow is an open-source machine learning library created by Google that provides many tools for building neural networks. It offers many high-level APIs that make it easy to train, test and deploy models to production environments, and that are also easy for beginners to learn. In TensorFlow 1.x, computation is based on graphs, in which operations are represented as nodes and the data flowing between them as links, and the graph needs to be built and executed separately.

As of TensorFlow 2.0, eager execution, which runs operations directly instead of building a graph first, is enabled by default. Keras is also integrated into the library as tf.keras, which makes TensorFlow a more compelling library for deep learning applications. TensorFlow also offers tools like TensorFlow.js and TensorFlow Lite, which help deploy models in the browser and on mobile and embedded edge devices.

1. PyTorch: PyTorch is an open-source library developed by Facebook. It is a preferred library for many researchers in academia because it is closer to the Python style of programming. Although PyTorch currently lacks many of the deployment and testing tools that TensorFlow offers, it has a growing community of developers who are building tools and libraries on top of it, such as fastai.

PyTorch vs. TensorFlow

With TensorFlow 2.0, PyTorch and TensorFlow have a lot more in common, as summarized in a tweet by Soumith Chintala (co-creator of PyTorch). Both libraries make developing, experimenting with, and deploying deep learning algorithms easier.

Different Deep learning algorithms:

Deep learning algorithms are versatile in terms of the type of data that can be used for training. They can be used for both structured data, like tables from a database, and unstructured data, like images, audio and text. Knowing these basics is the first step towards learning how to build your own data set.

1. Convolutional Neural Networks (CNNs): A CNN consists of many layers and is widely used for processing and extracting features from images. It takes the image as a 2D matrix of pixels and is mostly made up of convolution layers, pooling layers and fully connected layers.

Convolution layers apply filter operations, while pooling layers reduce the dimensions by taking either the maximum or the average of each region. The output of the pooling layers is flattened into a single vector and passed to the fully connected layers, which are used to identify the image. CNNs are good at image classification tasks because they perform good feature extraction.

2. Generative Adversarial Networks (GANs): GANs consist of a generator network and a discriminator network. The generator creates new data that resembles the training data, while the discriminator tries to tell the generated data apart from the real training data. GANs help to recreate images, add special effects to existing images and create fake images.

3. Recurrent Neural Networks (RNNs): RNNs allow the outputs from previous steps in a sequence to be used as inputs for the current step. This temporal memory makes them good at learning from sequential data, as in speech recognition, text and time series analysis. However, they can hold only short-term memory.

4. Long Short-Term Memory (LSTMs): An LSTM network is an extension of an RNN that extends the memory so that it can be retained over time. It contains forget gates, which can discard irrelevant information from earlier steps.

5. Variational Auto-Encoders (VAEs): VAEs are a type of autoencoder. Autoencoders consist of an encoder and a decoder network, which help in learning latent representations of the input. They are trained to minimize the reconstruction error between the input and output data.

6. Deep Belief Network (DBN): DBNs are generative deep learning models made up of multiple layers of hidden units with binary latent variables. Each layer in a DBN learns the entire input. They are trained with a greedy, layer-by-layer approach, which lets them learn quickly and efficiently.
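To make the CNN description in item 1 more concrete, the following sketch implements the filter operation of a convolution layer, max pooling and flattening in plain Python. The function names, the 5x5 input and the 2x2 filter are all made up for illustration. Real frameworks use optimized tensor operations and learn the filter values during training rather than fixing them by hand.

```python
def conv2d(image, kernel):
    """Slide the kernel over the image and sum the element-wise products.

    As in most deep learning libraries, the kernel is not flipped
    (strictly speaking this is cross-correlation), and no padding is used.
    """
    kernel_h, kernel_w = len(kernel), len(kernel[0])
    out_h = len(image) - kernel_h + 1
    out_w = len(image[0]) - kernel_w + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0
            for di in range(kernel_h):
                for dj in range(kernel_w):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        output.append(row)
    return output


def max_pool2d(feature_map, size):
    """Keep the largest value in each non-overlapping size x size window."""
    pooled = []
    for i in range(0, len(feature_map) - size + 1, size):
        row = []
        for j in range(0, len(feature_map[0]) - size + 1, size):
            window = [feature_map[i + di][j + dj]
                      for di in range(size) for dj in range(size)]
            row.append(max(window))
        pooled.append(row)
    return pooled


def flatten(matrix):
    """Turn a 2D matrix into the single vector fed to fully connected layers."""
    return [value for row in matrix for value in row]


# Hypothetical 5x5 grayscale image and a hand-picked 2x2 filter.
image = [
    [1, 2, 3, 0, 1],
    [4, 5, 6, 1, 0],
    [7, 8, 9, 2, 1],
    [1, 0, 2, 3, 4],
    [2, 1, 0, 1, 2],
]
kernel = [
    [1, 0],
    [0, -1],
]

feature_map = conv2d(image, kernel)   # 4x4 feature map
pooled = max_pool2d(feature_map, 2)   # 2x2 after pooling
vector = flatten(pooled)              # length-4 vector
print(vector)
```

Swapping `max(window)` for `sum(window) / len(window)` turns the pooling step into average pooling.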

Conclusion:

Deep learning algorithms and their applications are growing rapidly, which makes them exciting to learn and explore. They offer many advantages over traditional machine learning algorithms, especially when a lot of data is available. However, there are many concerns about the lack of interpretability of these models, as they are based on black-box approaches. With open-source libraries and communities, deep learning algorithms can be implemented easily for various applications.

ABOUT THE AUTHOR

Abhilash Pandurangan is a Master's student at the University of Southern California (USC), pursuing an MS with a Data Science specialization. He completed his Bachelor's in Computer Science at SRM Institute of Science and Technology. He has worked on various data science and deep learning projects during his internships, including as a Machine Learning Intern for a Los Angeles-based startup that focuses on visual search in the fashion industry.
